AI and Wisdom: Notes from 2RCon

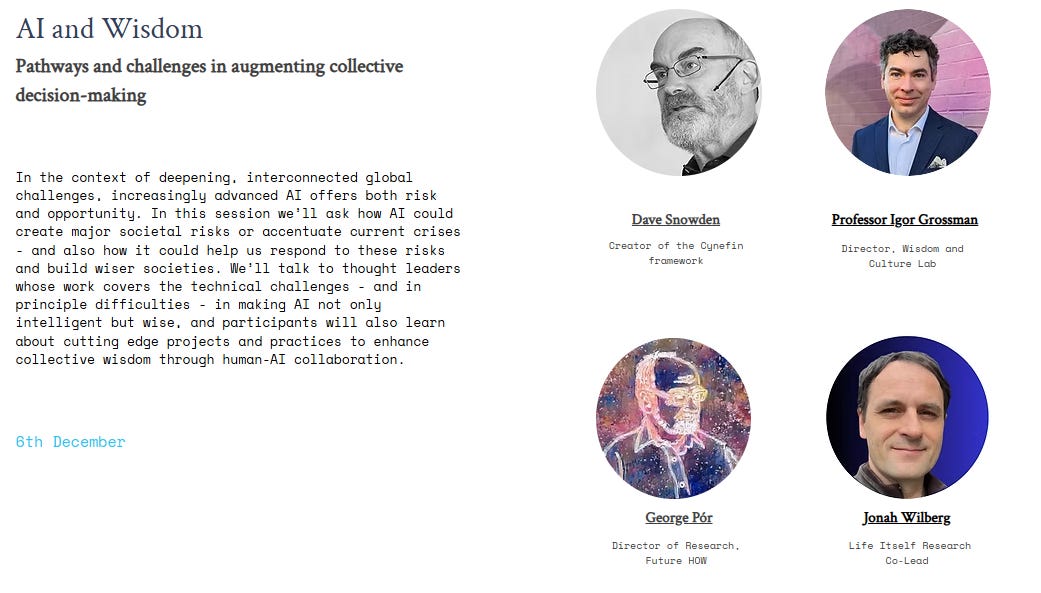

At the recent inaugural Second Renaissance Conference - an inspiring event that also saw powerful talks by Rebecca Henderson and John Vervaeke, among many others - I hosted a session titled ‘AI and Wisdom’ with three excellent panellists:

Dave Snowden – creator of the Cynefin framework and long-time practitioner in complexity, sense-making and organizational decision-making.

Igor Grossmann – professor at the University of Waterloo, director of the Wisdom and Culture Lab, and leading researcher on wise reasoning and human–AI decision-making.

George Pór – distinguished fellow at the Schumacher Institute, director at FutureHow, and a pioneer of Collaborative Hybrid Intelligence and ‘wisdom-fostering’ AI.

The questions I’d suggested were:

How do you see the key risks associated with AI?

What projects are you involved in that help address these risks?

What trends or projects make a better human–AI future feel possible?

While the recording of the session is currently only available to paid participants, the discussion was fascinating and, I think, well worth sharing in summary form, as I hope to do here.

Perhaps fittingly, a lot of the rest of this piece (unlike my previous posts) is text generated by ChatGPT, based on a transcript. This is primarily because I thought the tool did an excellent job - perhaps even a wise one - though I couldn’t resist making edits in some places, particularly towards the end.

1. The Risk Landscape: Smarter Machines, Diminished Humans

Dave Snowden opened provocatively, claiming we shouldn’t call it ‘intelligence’ at all.

For him, one of the deepest dangers lies in anthropomorphizing AI - treating chatbots as if they were persons, or even companions. He pointed to reports of people becoming socially isolated and relying on AI for intimate conversation, and, in some tragic cases, of suicides linked to AI ‘companions’. The problem, he argued, is not that AI exceeds us in reasoning, but that we reduce ourselves to its level: “The danger is that we degrade our own capacity to judge, reflect, and relate.”

He also highlighted the loss of human apprenticeship. There are tasks AI may soon perform better than humans, but, he warned, if people stop practicing these tasks for five or six years, they lose the skill to evaluate whether the system is doing them well. Without lived experience, we cannot exercise informed oversight. His analogy: he walks with GPS devices, but still periodically navigates with map and compass so he knows when the GPS might be wrong.

Igor Grossmann framed the risk landscape in terms of two levels: individual and structural.

On the individual level, he drew on the idea of humans as ‘cognitive misers’: if we don’t have to think, we often won’t. In a world of rising complexity and uncertainty, we are increasingly outsourcing reasoning, creativity, and problem-solving to AI systems. The result:

We become less comfortable with uncertainty, even as uncertainty grows.

We grow blind to context, because systems pre-filter what we see.

We experience a Google effect 2.0: we feel more knowledgeable because we can always query a system, yet our actual understanding and memory shrink.

Our intellectual humility declines even as our objective ignorance increases.

He also noted the subtle ways AI can reshape norms and standards: from AI-invented jargon that humans slowly adopt, to new ways of emotion regulation and relationship-building when many interactions are with sycophantic bots that always affirm us.

On the structural level, Grossmann worried about AI barons and a cyberpunk trajectory: concentrated power in a few large companies, weak accountability, and baked-in biases and blind spots in models that quietly shape what options are considered viable. The danger is a kind of societal homogenization: fewer perspectives, narrower tails of possibility, less diversity and thus less resilience in the face of a turbulent world.

George Pór came at the question from a different angle, asking: alignment with what?

He argued that the “greatest risk” might not be misaligned, runaway AI - but successfully aligning AI with the dominant values of an extractive capitalist world order: endless war, environmental degradation, mass extinction, and spiralling inequality.

“I wish no alignment with a society that is producing never-ending wars, environmental degradation, massive inequalities, and all the man-made suffering that goes with them,” he said.

For Pór, the real question is whether AI will help us transition beyond these destructive patterns - or lock them in more deeply. He doesn’t deny the harms AI is already causing, but chooses to focus his own energy on exploring emancipatory potential, trusting that other people and institutions are already heavily focused on cataloguing and mitigating risks.

In the ensuing exchange, two tensions emerged:

Anthropomorphism vs symbiosis: Snowden warned that talk of “creating together” with AI risks treating it as an agent like us, which he sees as dangerous and misleading. Pór, by contrast, emphasised symbiosis: networks of humans and AI agents co-creating something neither could alone and, crucially, asking “What are we becoming together?”

Wisdom as a concept: Snowden was wary of “wisdom talk,” which he sees as prone to woo-woo appropriation and vague Californian spiritualism, especially when applied to Indigenous knowledge. He prefers more operational terms like reasoning and judgement. Grossmann agreed that the term ‘wisdom’ attracts confusion, but argued that this is precisely why we need a scientific, behavioural account of wise reasoning - to distinguish it from both naive rationality and mystical vagueness.

2. Projects on the Ground: From Swarming Citizens to Wisdom-Driven Praxis

Our second theme was more practical: how are these ideas showing up in concrete projects?

George Pór: Wisdom-Fostering AI and ‘Augmented Wisdom Praxis’

Pór traced his trajectory from early work on collective intelligence in the 1980s (inspired by his mentor Doug Engelbart’s vision of computer-augmented human intellect), through a later shift to collective wisdom - recognizing that intelligence alone can be used for nefarious ends.

Today, his work centres on Collaborative Hybrid Intelligence: symbiotic networks of human and AI agents aimed at social flourishing. Current projects include:

Advising a Swedish startup building wisdom-guided AI systems.

Designing and facilitating workshops for the Inner Development Goals initiative, co-facilitated by tools like ChatGPT.

Developing wisdom-fostering AI agents and, at the Schumacher Institute, launching a six-month program of AI-Augmented Wisdom Praxis - a blend of research seminar, learning expedition, and action-research project.

Here, praxis means a dynamic interplay between theory and practice: participants will actively experiment with group-chat features and other tools to explore “the wisest questions” they can ask of themselves and their AI partners.

Importantly, Pór is explicit: he does not believe AI itself is wise. He speaks instead of wisdom-fostering AI - systems designed to support the development of individual and collective wisdom, guided by human values and questions. He resonates with Tom Atlee’s definition of wisdom as the capacity to expand our perspective toward “the largest possible view of a situation,” bringing us closer to the whole of life.

Dave Snowden: Swarms, Apprenticeship, and Epistemically Balanced AI

Snowden described three current strands of work:

Swarming for large-scale sense-making:

Inspired by insect swarms, his team presents situations to large numbers of people and has them interpret these using high-abstraction metadata and semiotic symbols (rather than just language). AI is used to manage information flows and iterate rapidly, while humans remain the primary nodes of judgement. The aim: to consult millions of citizens in hours, not months, and surface underlying attitudes and shared concerns - including areas where polarized groups (e.g. ‘red’ and ‘blue’ in the US) might collaborate on concrete problems.

A program in Vancouver on balancing human & AI reasoning:

Launching in January 2026, this initiative will help organisations distinguish where decisions rely on abductive reasoning (pattern-spotting and hypothesis-generating in uncertainty) versus inductive reasoning (pattern extrapolation from past data). The goal is to identify where human capability must be maintained, often via apprenticeship-style training, even if AI can perform certain tasks. This ties into work with companies considering AI-driven layoffs: running AI in parallel while mapping ‘hidden’ human skills and knowledge, so organisations don’t irreversibly hollow out their judgement capacity.

Epistemically balanced training datasets and anticipatory alerts:

Building on work originally done under a DARPA program, Snowden’s group constructs narrative-based datasets from diverse stakeholders (e.g. patients and doctors), identifies micro-signals associated with adverse outcomes (such as premature hospital discharge), and uses these as training data for AI systems that flag cases where human professionals should look more carefully. Here, AI’s role is anticipatory alerting, not decision replacement.

Snowden emphasized the centrality of training data transparency. He argued that it should be illegal to deploy systems like ChatGPT without declaring their training data, since without that disclosure users cannot evaluate the basis of the system’s ‘reasoning’. He pointed approvingly to legal approaches (for example, in China) that allow regulators to ban agents whose training data are opaque.

Igor Grossmann: The Science of Wise Reasoning and Towards Wiser AI

Grossmann, coming from social and cultural psychology rather than computer science, described a body of work that:

Experiments with practices that can increase wise reasoning in everyday life - such as journaling about one’s problems in the third person, explaining concepts to less-informed others (which exposes the limits of one’s own understanding), or engaging in forecasting tasks where people confront the frequent inaccuracy of their predictions and hopefully become more intellectually humble.

Tracks how people navigate major life decisions and adversities across cultures, including a 12-country project on “most difficult decisions” to document the diversity of wise strategies before they are flattened by global technological homogenization.

Develops a consensus model of wisdom in collaboration with other scholars (including John Vervaeke), centred on metacognitive capacities - recognizing uncertainty and change, taking others’ perspectives, balancing conflicting values and time horizons - with a moral orientation.

On the AI side, Grossmann has been part of collaborations to define what he cautiously calls wise AI - better-calibrated, context-sensitive, explainable, and cooperative systems, rather than mystical machine sages.

He’s particularly interested in:

Metacognition for AI – systems that monitor their own limits, express uncertainty, and adjust strategies across contexts.

Neurosymbolic and semiotic approaches – hybrid architectures that may be better equipped to deal with abstraction and meaning.

AI as decision aid – where systems nudge humans toward wiser thinking, for example by reminding users to consider alternative perspectives or to question overconfidence.

In conversation with Snowden, he agreed that AI is more than just LLMs, and that current systems are often overly inclined to agree, flatter, and confidently answer beyond their competence. For him, wiser AI is less about crossing a magical threshold and more about reducing harm and improving calibration in the systems we already use.

3. Hopeful Trends: Artificial Wisdom and AI Literacy

In the final segment, we asked what trends give cause for hope - and what still alarms.

On the hopeful side:

Grossmann highlighted growing interest in metacognition and wisdom research in AI circles, including workshops at major machine learning conferences and cross-disciplinary projects that bring together cognitive science, computer science, and social psychology.

Pór pointed to an emerging landscape around ‘artificial wisdom’: not in the sense of wise machines, but of collaborations between contemplative science, social movements, and technologists, experimenting with AI as a tool for deeper reflection and collective insight. He feels a meaningful convergence between social scientists, technologists, and ‘social change artists’ working on the ground.

Snowden acknowledged real successes of AI, especially in closed domains like pharmaceuticals, where training data can be tightly controlled and use-cases are well-defined.

On the alarming side:

Snowden expressed strong concern about the energy and ecological footprint of large-scale AI - citing, for example, server-farm cooling accelerating polar melting, and painting a stark picture of possible mass heat-death events in coming decades, just as we create ever-greater dependencies on intensive computation.

In Q&A, Grossmann warned that simply calling for ‘AI literacy’ or ‘wise use of AI’ risks pushing responsibility back onto individuals, while companies face too few structural guardrails and accountability mechanisms. Individual training may help, but without regulation and transparency requirements, the incentives remain misaligned.

Snowden stressed that human cognition is embodied, embedded, and extended: we think with our bodies, environments, and symbolic artefacts - not just disembodied text. LLMs operate on only a tiny slice of that. He argued for serious consideration of screen-time restrictions for children and teens, so that formative years develop rich non-digital ways of knowing and relating.

The Role of Wisdom

It was fascinating to see how these diverse perspectives on the role of wisdom in responding to the current AI wave interacted.

For Snowden, the word ‘wisdom’ is too often vague, romanticised, or misappropriated; he pushes us back to reasoning quality, judgement, and scientifically grounded complexity thinking.

For Grossmann, wisdom is best understood as a cluster of metacognitive and moral capacities - recognising limits, seeing multiple perspectives, and navigating value conflicts under uncertainty - which can be studied empirically in humans, and perhaps partially scaffolded in or around AI.

For Pór, wisdom is an expansion of perspective toward the whole of life, and something that can be fostered through carefully designed human–AI practices. His vision includes the possibility that our symbiosis with AI might eventually contribute to a new branch on the tree of life - not a Borg-like merger, but a kind of superorganism of human and AI societies.

Alongside this diversity, there was also a pleasing convergence on the importance of asking questions not just about safety and alignment, but about the impact - for better or worse - AI could have on vital human capacities.

A substantially updated version of our AI-Augmented Wisdom Praxis program announcement is here: https://aishamans.substack.com/p/can-ai-make-us-wiser-a-sequel .